Most recent discoveries in particle physics are linked to an increase in detector volume and/or granularity, which makes it possible to observe complex phenomena that were previously inaccessible for lack of precision. This approach increases the available statistics and precision by several orders of magnitude, which facilitates the detection of rare events, at the price of a significant increase in the number of channels. The challenge is that most standard techniques for reconstruction and triggering do not work in such a context. For example, energy-threshold-based triggers fail to handle the complexity of high pile-up collisions. Neural network methods are known to handle noisy and complex input data well and to deliver high-level classification and regression. In particular, convolution techniques Ref 1 have brought outstanding improvements to the field of computer vision. Unfortunately, they do not cope with the very peculiar topologies of particle detectors and the irregular distribution of their sensors. Alternatives have been developed to obtain the same classification power in this kind of non-Euclidean environment, for example spatial graph convolution Ref 2, which applies adapted convolution kernels to data represented as an undirected graph labeled by the sensor measurements. These techniques have given excellent results on particle detector data at the LHC Ref 3 and also in neutrino experiments Ref 4. They allow particle identification and continuous parameter regression, as well as segmentation of entangled data, which is a typical concern in secondary particle showers.

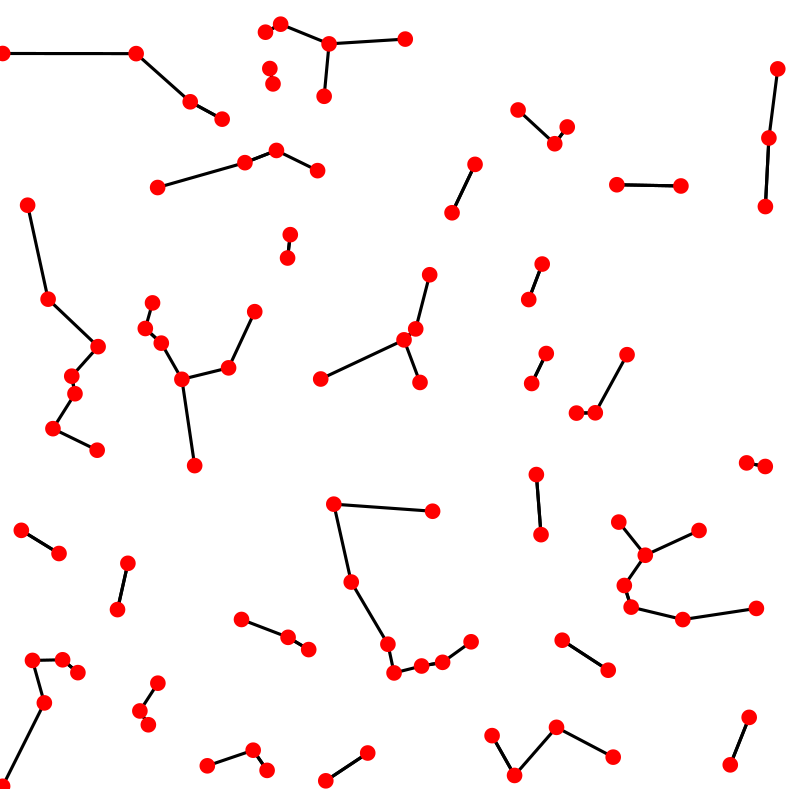

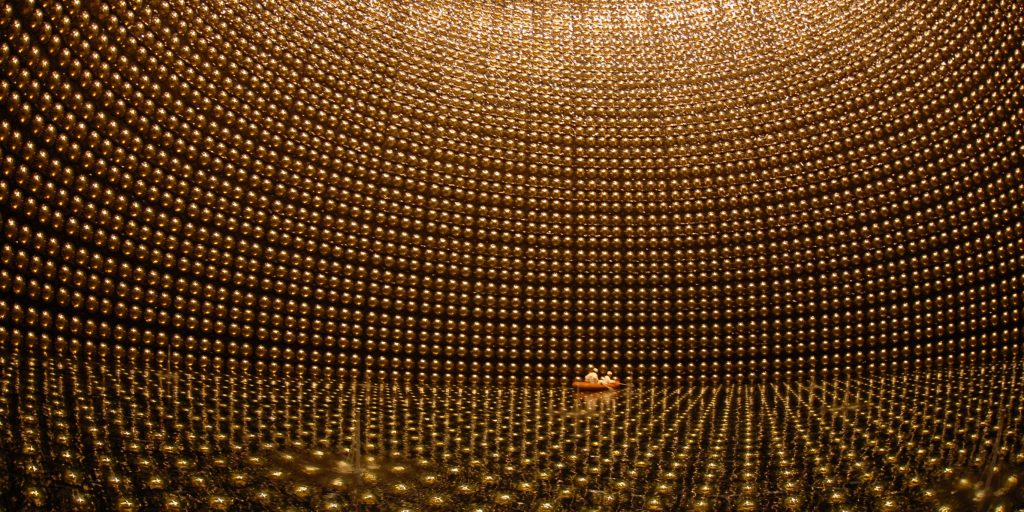

The operations that transform the data into a graph (K-nearest-neighbor algorithm, see Figure 1) are often very computationally expensive. In particular, all the techniques in which this operation is based on learned parameters (in the machine learning sense) prevent the system from being used in contexts where computational time or latency is constrained (any triggering electronics, real-time data monitoring systems, and even offline systems with too large a data volume). For example, in the Super-Kamiokande neutrino experiment, a complex shape identifier would advantageously replace the current energy cut applied during the reconstruction phase, which rejects many low-energy events despite their physical interest. Another example is the future high-granularity endcap calorimeter (HGCal) of CMS Ref 5, for which it becomes crucial to extract high-level trigger primitives directly from the electronics in order to handle the complexity of high-luminosity collisions and take accurate triggering decisions. This is why it is of utmost importance to design high-performance versions of these algorithms, which can improve performance in all these constrained situations and make their deployment in the detectors possible.
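To make the cost of the graph construction concrete, here is a minimal sketch of a brute-force K-nearest-neighbor graph in NumPy. It is only an illustration under simple assumptions (Euclidean distance on raw hit coordinates, no learned metric); the function name `knn_graph` and the toy coordinates are hypothetical and not taken from any experiment software. The pairwise distance matrix makes both time and memory grow quadratically with the number of hits.

```python
import numpy as np


def knn_graph(positions, k):
    """Return an (N, k) array holding, for each hit, the indices of its
    k nearest neighbors sorted by increasing distance (brute force)."""
    positions = np.asarray(positions, dtype=float)
    n = len(positions)
    # All pairwise squared distances: O(N^2) time and memory.
    diff = positions[:, None, :] - positions[None, :, :]
    dist2 = np.einsum("ijk,ijk->ij", diff, diff)
    np.fill_diagonal(dist2, np.inf)  # a hit is not its own neighbor
    # Select the k smallest distances per row, then order them.
    idx = np.argpartition(dist2, k - 1, axis=1)[:, :k]
    rows = np.arange(n)[:, None]
    order = np.argsort(dist2[rows, idx], axis=1)
    return idx[rows, order]


# Toy example: four hits on a line at x = 0, 1, 3, 7.
hits = np.array([[0.0], [1.0], [3.0], [7.0]])
neighbors = knn_graph(hits, k=2)
```

When the neighbor search must be repeated for every event, or in a learned feature space, this quadratic step is what dominates the latency budget.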

The objective of this project is to develop and implement new efficient selection algorithms for constrained computational environments by combining three main ideas:

- Reducing the graph construction complexity by developing algorithms based on pre-calculated graph connectivity, which would give linear complexity for the online part by exploiting the intrinsic parallelism of the problem. This is made possible by the fixed positioning of the sensors in particle detectors.

- Developing a segmented version of graph convolution that can be distributed over multiple computational units (CPU, GPU, FPGA…). A typical segmentation is induced by the distribution of the data in the off-detector trigger devices and must be handled by partial graph convolution followed by a concentration phase.

- Optimizing the size and the nature of the convolution networks through advanced derivative-free optimization techniques and adaptation to the electronic implementation.
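The first idea can be sketched as follows. Because the sensors do not move, a neighbor table can be computed once offline; the online step then reduces to a gather over that table, whose cost is linear in the number of sensors for a fixed number of neighbors. This is a minimal sketch assuming a generic mean-aggregation graph convolution with a ReLU activation, not the specific kernels of Ref 2; `graph_conv`, `w_self` and `w_neigh` are hypothetical names introduced for illustration.

```python
import numpy as np


def graph_conv(features, neighbors, w_self, w_neigh):
    """One mean-aggregation graph convolution layer.

    features : (N, F) per-sensor measurements for one event
    neighbors: (N, k) neighbor table, precomputed offline from geometry
    w_self, w_neigh: (F, F_out) weight matrices (assumed already trained)
    """
    # Gather and average the neighbor features: O(N * k) operations,
    # each row independent of the others (trivially parallel).
    aggregated = features[neighbors].mean(axis=1)
    # Combine own and neighbor information, then apply a ReLU.
    return np.maximum(features @ w_self + aggregated @ w_neigh, 0.0)


# Offline: fixed connectivity of a toy ring of four sensors.
NEIGHBORS = np.array([[1, 3], [0, 2], [1, 3], [0, 2]])

# Online: one event, one feature (e.g. deposited energy) per sensor.
event = np.array([[1.0], [2.0], [3.0], [4.0]])
out = graph_conv(event, NEIGHBORS, np.array([[1.0]]), np.array([[1.0]]))
```

No distance is computed online in this sketch: the only per-event work is the gather and two small matrix products.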

These objectives will be pursued in three experimental contexts: offline HGCal reconstruction, the online HGCal Level 1 trigger, and Super-Kamiokande reconstruction of the Diffuse Supernova Neutrino Background (DSNB).

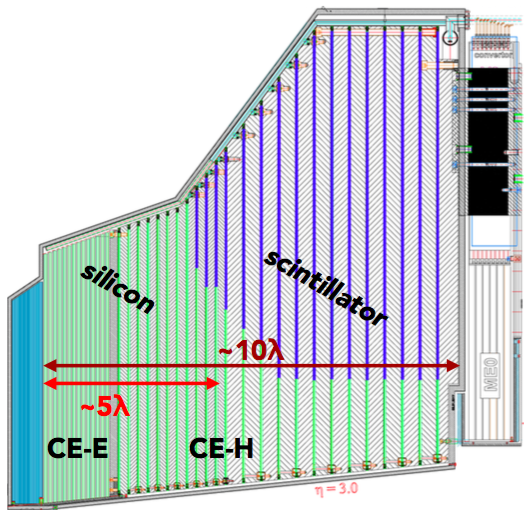

HGCal is the future endcap calorimeter of CMS (Figure 2). It is a very high granularity sampling calorimeter that aims to measure the energy, position and time-stamp of particles with 6.5 million channels from Si-based sensors Ref 5. It is a very ambitious project in terms of detection technology but also in terms of analysis software, because it generates a huge amount of data describing very entangled events. These events have to be selected in the Level 1 trigger with a very low latency (a few microseconds).

The offline HGCal reconstruction is done by a complex system named TICL Ref 11, which is based on different layers of identification applied iteratively to recognize different kinds of particles (unambiguous electromagnetic showers, hadrons, minimum ionizing particles…). These iterations are implemented in a plugin system that eases the testing of alternative reconstruction techniques. The current system is a demonstrator based on legacy techniques, and not much effort could be dedicated to its performance. In particular, its discrimination power is not sufficient to implement particle flow algorithms. This is why it is necessary to try new techniques, including graph convolution.

Even if the time constraint is not as strong as in online systems, the data volume collected with the new high-granularity sub-detectors is so large that it is of major importance to fully optimize these algorithms, which is why this project is completely in line with the needs of the HGCal project. Another reason is that it would be very interesting to also implement this kind of technique in the High Level Trigger (HLT), in which latency is a major issue. There are many advantages to using the same technique in both the HLT and offline reconstruction, because it allows cross-developments and simplifies the downstream assessment of the performance of the online algorithms.

The HGCal trigger is composed of several layers. In particular, the Level 1 (L1) trigger is implemented in the electronics and provides trigger primitives to the upper software layers (central sub-detector triggers). The L1 trigger itself consists of two layers of FPGA boards. The first layer reformats the data and sends them to the second layer. There, a seeding operation identifies the hot spots around which hits are related to the same particle. The hits are then clustered within a radius around each seed. From these hit collections, particle identification and energy regression are performed. This part is done with energy-counting algorithms, with energy corrections to compensate for energy losses and pile-up contamination. This algorithm will become less efficient if the pile-up becomes too high. It has been demonstrated in Ref 3 that Graph Convolution Neural Network (GCN) techniques would perform much better in terms of identification, but also that their current implementation is compatible neither with the resources available in an FPGA nor with the latency available in the triggering process Ref 10.
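The seeding and radius-clustering steps described above can be illustrated with a short sketch. This is not the HGCal firmware algorithm: it is a simplified greedy version written for illustration, assuming 2D hit positions, a single seed threshold and a fixed radius. The function `seed_and_cluster` and its parameters are hypothetical.

```python
import numpy as np


def seed_and_cluster(xy, energy, seed_threshold, radius):
    """Greedy seeding followed by fixed-radius clustering.

    Returns per-hit cluster labels (-1 for unclustered hits) and the
    summed energy of each cluster, in order of decreasing seed energy.
    """
    xy = np.asarray(xy, dtype=float)
    energy = np.asarray(energy, dtype=float)
    labels = np.full(len(xy), -1)
    n_clusters = 0
    # Visit hits from the most to the least energetic.
    for i in np.argsort(-energy):
        if energy[i] < seed_threshold:
            break  # remaining hits are too soft to seed a cluster
        if labels[i] != -1:
            continue  # already absorbed by a more energetic seed
        # Attach every still-free hit within `radius` of the seed.
        dist = np.linalg.norm(xy - xy[i], axis=1)
        members = (dist <= radius) & (labels == -1)
        labels[members] = n_clusters
        n_clusters += 1
    # Simple energy counting: sum the hit energies per cluster.
    cluster_energy = np.array(
        [energy[labels == c].sum() for c in range(n_clusters)]
    )
    return labels, cluster_energy


xy = [[0.0, 0.0], [0.5, 0.0], [10.0, 0.0], [10.4, 0.0], [50.0, 50.0]]
energy = [5.0, 1.0, 8.0, 2.0, 0.5]
labels, cluster_energy = seed_and_cluster(xy, energy, 3.0, 1.0)
```

In this toy event the two energetic hits each seed a cluster and absorb their close neighbor, while the isolated soft hit stays unclustered. The summed energies are the kind of quantity that an energy-counting algorithm would then correct for losses and pile-up.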

This is why it could be very valuable to fully optimize the algorithmic part of the convolution as well as the topology of the neural network implemented in the FPGA. An alternative would be to split the convolution into parallel parts running on different FPGAs. The current architecture of the HGCal trigger does not allow this, but it is interesting to study it and to provide pertinent arguments for an evolution.
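As an illustration of splitting the convolution into parallel parts, the sketch below distributes the neighbor aggregation over several computational units and then merges the partial results in a concentration step. It assumes a plain sum aggregation, for which the split is exact, and an arbitrary assignment of source nodes to units. The names `partial_aggregate`, `segmented_aggregate` and `owner` are hypothetical, and the loop over units stands in for what would run concurrently on separate devices.

```python
import numpy as np


def partial_aggregate(features, edges, n_nodes):
    """Sum the features of source nodes into their destination nodes
    for the given (source, destination) edge list."""
    out = np.zeros((n_nodes, features.shape[1]))
    # np.add.at accumulates correctly when a destination repeats.
    np.add.at(out, edges[:, 1], features[edges[:, 0]])
    return out


def segmented_aggregate(features, edges, owner, n_units):
    """Each unit handles the edges whose source node it owns, then a
    concentration phase sums the partial results."""
    partials = []
    for unit in range(n_units):  # would run in parallel in practice
        local_edges = edges[owner[edges[:, 0]] == unit]
        partials.append(
            partial_aggregate(features, local_edges, len(features))
        )
    return np.sum(partials, axis=0)  # concentration phase


features = np.array([[1.0], [2.0], [3.0], [4.0]])
# Directed edge list (source, destination) of a toy ring graph.
edges = np.array([[0, 1], [1, 0], [1, 2], [2, 1],
                  [2, 3], [3, 2], [3, 0], [0, 3]])
owner = np.array([0, 0, 1, 1])  # nodes 0, 1 on unit 0; 2, 3 on unit 1
split = segmented_aggregate(features, edges, owner, n_units=2)
full = partial_aggregate(features, edges, len(features))
```

For a sum aggregation, `split` and `full` agree, so the segmentation changes where the work is done but not the result. Other aggregations (mean, max) need a slightly different concentration step.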

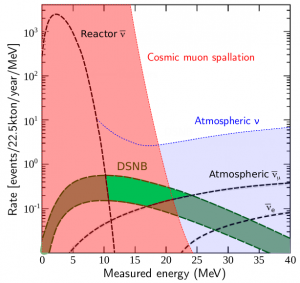

One of the objectives of the Super-Kamiokande experiment (Figure 3) is to observe the Diffuse Supernova Neutrino Background (DSNB) Ref 8. It is a flux of neutrinos originating cumulatively from all the supernova events that have occurred throughout the Universe. This is a very important cosmological probe, linked to the time evolution of the star production rate. Until now, it has never been observed because it is presently much smaller than the background. As shown in Figure 4, the DSNB spectrum competes with three different backgrounds: reactor production (dashed red line on the left), which is irreducible, cosmic muon spallation in pink, and atmospheric neutrinos in blue. The spallation and atmospheric neutrino events have very different signatures from the DSNB and could be eliminated by a shape detection technique, possibly a GCN.

Indeed, the detection reaction in Super-Kamiokande involves an anti-neutrino interacting with a proton, producing a positron and a neutron. After thermalization, the neutron is captured by a nucleus (with 20% efficiency in water, and currently 50% thanks to the addition of gadolinium). This capture on hydrogen or gadolinium induces the emission of a photon at 2.2 MeV or 8 MeV, which interacts with electrons of the water or creates an electron/positron pair. This produces a particular signature in the image collected by the detector. If we can train a GCN to learn this particular shape, we could reduce the background significantly and thus lower the energy threshold that is presently used. With these improvements, we hope to be able to measure the DSNB in the narrow but significant window colored in light green in Figure 4.
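For reference, the reaction chain described in this paragraph can be written compactly as below. The notation is ours: the anti-neutrino is taken to be an electron anti-neutrino (inverse beta decay), $A$ denotes the mass number of the capturing gadolinium isotope, and the quoted energies are those given in the text, with the gadolinium de-excitation shown as one or more photons.

$$
\bar{\nu}_e + p \rightarrow e^{+} + n
$$

$$
n + p \rightarrow d + \gamma \quad (2.2\ \mathrm{MeV})
$$

$$
n + {}^{A}\mathrm{Gd} \rightarrow {}^{A+1}\mathrm{Gd}^{*} \rightarrow {}^{A+1}\mathrm{Gd} + \gamma\text{'s} \quad (\approx 8\ \mathrm{MeV})
$$

The signature to be learned is therefore a prompt positron signal followed by a delayed photon signal from the neutron capture.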